This month I spent some time in the company of Tobias Mayer, author of "The People's Scrum". The book is a collection of his blog posts, grouped into themes, that explore ideas, topics and challenges around organisations' transformation along the Scrum journey. It drives home a striking message: times are changing, and a silent revolution is brewing.

I chanced upon this book by accident while browsing some tweets on #agile. I saw a picture of the book cover, and it struck me as odd and interesting. Having never heard of Tobias Mayer before, I was intrigued, so I decided to follow him on Twitter and buy the book on impulse. Mind you, I'm really glad I did!

Tobias' style of writing is genuinely deep: written with sincerity, openness and passion, it cuts to the core of uncomfortable-but-so-relevant truths. He writes with a depth of experience so poignant that it forces you to think hard about the course you're on, and about the things you simply accept and take for granted.

I was taken on quite a roller coaster ride, experiencing moments of pure resonance, thinking I'm on the same wavelength as this guy (I'm not that weird after all, just the odd one out in most of my workplaces), riding high, in phase, I'm on the right track!!

Yet there were also instances when I felt a little edgy, somewhat uncomfortable, noticeably shifting my position as I lay in bed reading at night. I'd stop, put the book aside and sleep on it. I have just started my stint in consulting (not as an agile coach per se; agile is one tool in my consulting toolbox ;-) ), so it was enlightening and awakening at the same time to see what could be in store for me, both personally (i.e. the self-realisation of what true happiness means: does the road to consultancy end in permanent employment, I wonder?) and professionally (many of the experiences Mayer shares ring a bell, as I've experienced similar ones).

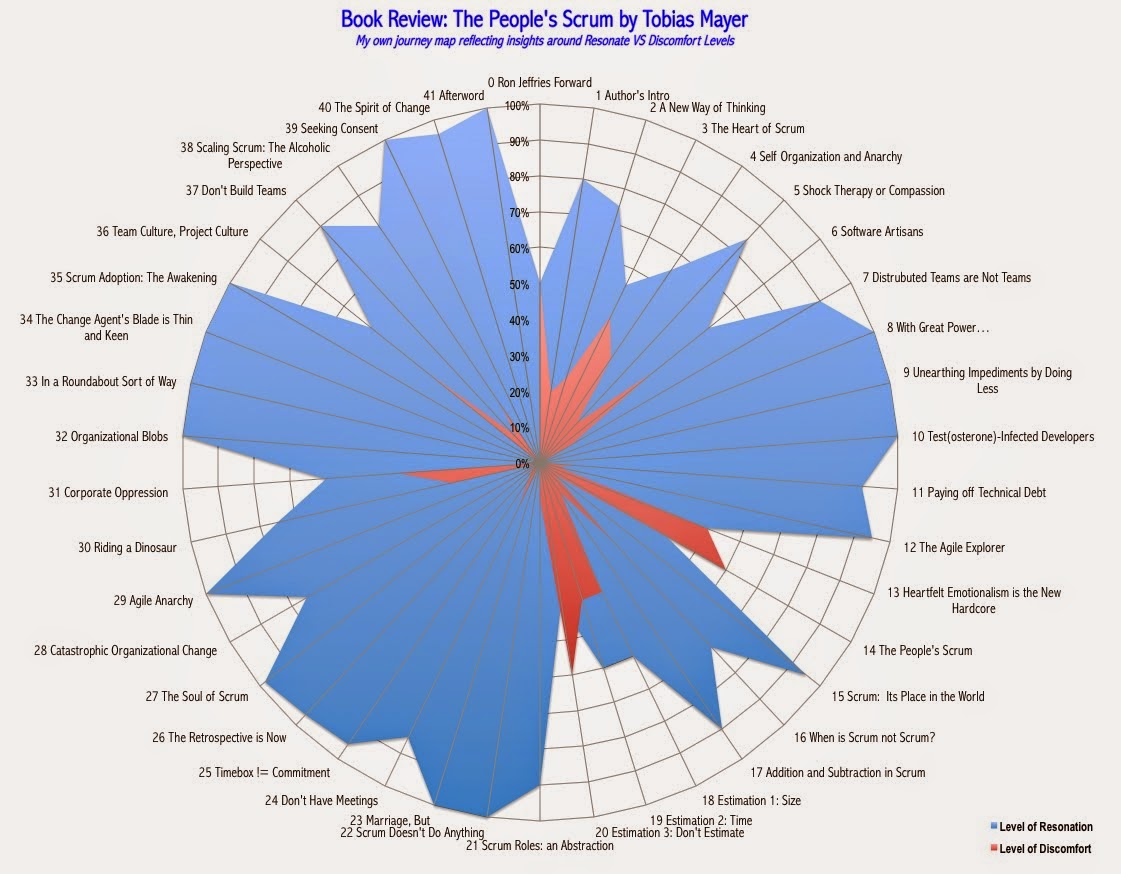

Deeply touched by the nature of this book and Mayer's genuine disclosure of personal experiences, I decided to take a chance and write a somewhat different book review. Because the topics struck certain nerves, either resonating (fully in agreement with Mayer) or causing discomfort (not sure, not convinced), I thought I'd present a review based on a picture that describes these feelings, so I graphed something that looks like this:


I assessed my feelings in almost real time as I read each article. I didn't spend much time processing, thinking deeply, debating or self-reflecting in detail. I responded with gut feel, instinct and, of course, the life and work experiences I've had along the way, so take it as a rough first cut!

Here are the detailed comments for each article (I've not yet had the time to break these out into separate links). Each entry reads as:

Section, Title, Level of Resonance, Level of Discomfort, Comments